They will force you, open source maintainers, to drink the gasoline

They will force you to drink the gasoline

A few weeks back I attended unprompted con in San Francisco. I really enjoyed it overall and will try to make it to all of them, including the recently teased unprompted.au in Sydney.

I had a lot of great conversations and saw some great presentations, but I left with an overwhelming sense that they will force open source maintainers to adopt AI.

No fewer than five hacking robots were presented, with several others in attendance. Google, Anthropic, XBow, Aisle, and ZDI all presented 0-day finding robots and programs. All show great results, as we’ve seen recently with Mozilla’s “The zero-days are numbered” blog post showing vulnerabilities found in partnership with Anthropic.

All of them are finding and reporting vulnerabilities in open-source projects. Real bugs are being found and fixed – it’s great.

Most of the presenters mentioned the balance they need to strike between the number and the quality of discoveries, and how they validate and triage the reports. Google mentioned generating suggested fixes as well.

This is all great, but there are two major problems:

- Anyone can make a hacking robot, and

- Not everyone wants AI submissions

Make your own hacking robot

It’s trivial to create your own hacking robot that will gladly find real and imagined vulnerabilities. You just point Claude at a C file from an open source library and say “this file has a vulnerability, find it.” It will find one, and often the vulnerability will be real.
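To show how little is involved, here is a minimal sketch of that robot. The names `build_prompt`, `hunt`, and `ask_model` are made up for illustration, and `ask_model` is a stand-in for whatever LLM client you happen to use; no particular vendor API is assumed.

```python
from pathlib import Path
from typing import Callable

# The leading prompt quoted above. Note that it asserts a bug exists
# before any analysis has happened.
LEADING_PROMPT = "this file has a vulnerability, find it."


def build_prompt(source_path: str) -> str:
    """Combine the leading prompt with the contents of one source file."""
    path = Path(source_path)
    code = path.read_text(encoding="utf-8", errors="replace")
    return f"{LEADING_PROMPT}\n\n--- {path.name} ---\n{code}"


def hunt(source_path: str, ask_model: Callable[[str], str]) -> str:
    """Send the prompt to a caller-supplied model function.

    `ask_model` is hypothetical: any function that takes a prompt string
    and returns the model's reply. The return value is an unvalidated
    claim, not a confirmed finding.
    """
    return ask_model(build_prompt(source_path))
```

Because the prompt presupposes a bug, the reply will likely describe one whether or not it exists. Nothing in this sketch reproduces, validates, or triages the claim, and that is the part the big firms are spending their effort on.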

You can now submit it to the maintainer! Maybe they even have a bug bounty!

Anyone who’s been on a security team is aware of the “beg bounty” problem: you get a pile of low-quality reports for things like your password change policy being out of compliance, followed by begging for payment for telling you that the “best practice” for passwords is 6-12 random characters and your forms don’t enforce it.

The big firms are investing in validation and triage, but not everyone will, either because they don’t know to or because the financial incentives don’t reward it.

The triage, suggested fixes, and PRs will be vital, but not every fix will be simple. Some will require re-architecting features or whole libraries because the issues are structural. Maintainers won’t be able to just apply suggested fixes.

Not everyone wants AI submissions

The bigger problem is that not everyone wants AI submissions, even if they’re real bugs. To many people, AI is a non-starter.

I’m a fan of AI and use it a lot in my own work, but I’m cognizant of others’ opinions on it. I’ve seen a few issues and PRs closed due to disclosed, and suspected, use of AI.

In conversation at unprompted, I asked one of the hacking robot creators what they’d do if they found a ton of vulnerabilities in a project that was against AI. Would they fork the project to maintain it, or would they just sit on a stockpile of 0-days?

After some fence-busting, he eventually said:

“It’s not my job to fix their broken governance models and we would need to publish the vulnerabilities.”

He wasn’t aware of the disclosure wars of the ’90s and 2000s, nor of the Full Disclosure mailing list.

You will drink from the firehose

After this interaction and a few others, I’m pretty confident a wave of reports and PRs is coming for all open-source projects. Some will be high quality and some won’t. The quality matters, but the sheer size of the wave will drown you, and I think projects will have to adopt AI themselves to stay above water.

Being an open-source maintainer is already thankless, and the workload is about to skyrocket 🚀.

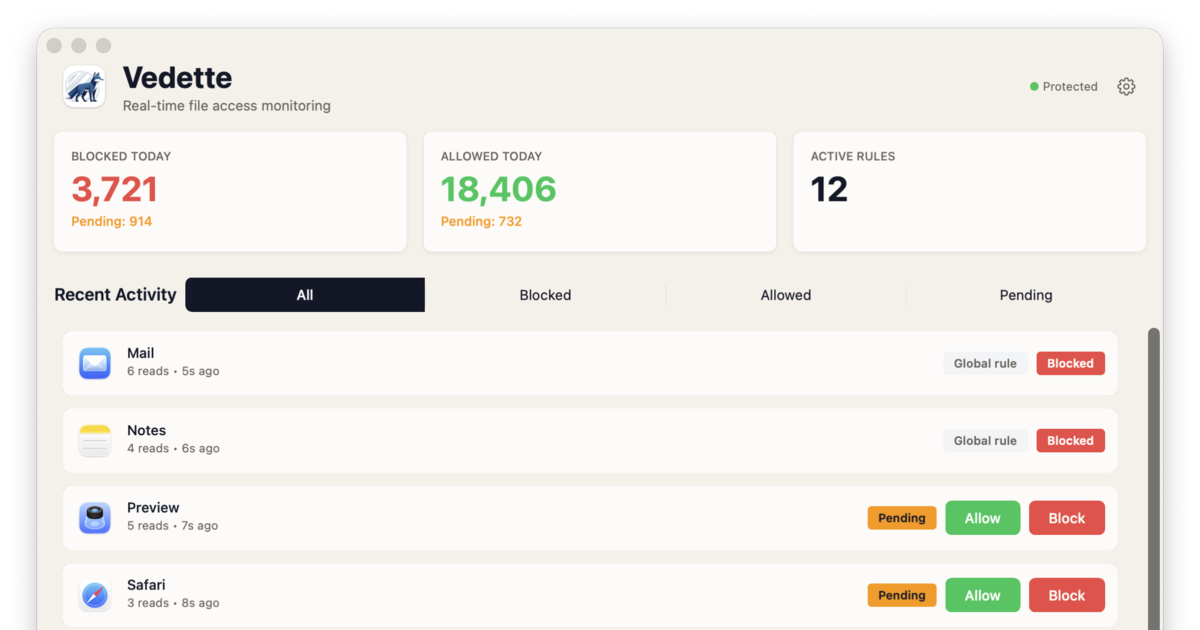
